Regularization in machine learning: an example

Elastic Net is a combination of Ridge and Lasso regression. The regularization techniques in machine learning include Lasso (L1), Ridge (L2), and Elastic Net.


The process of regularization reduces the complexity of the regression function.

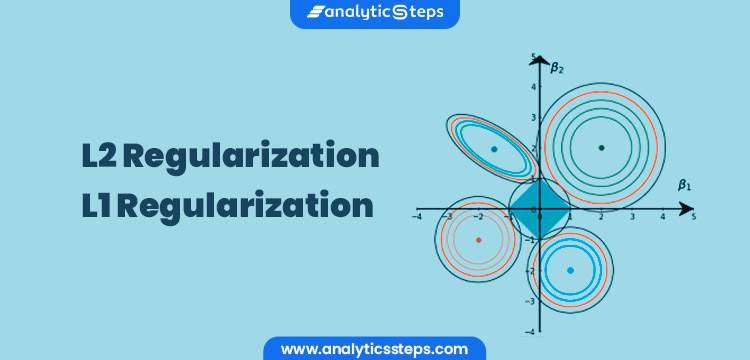

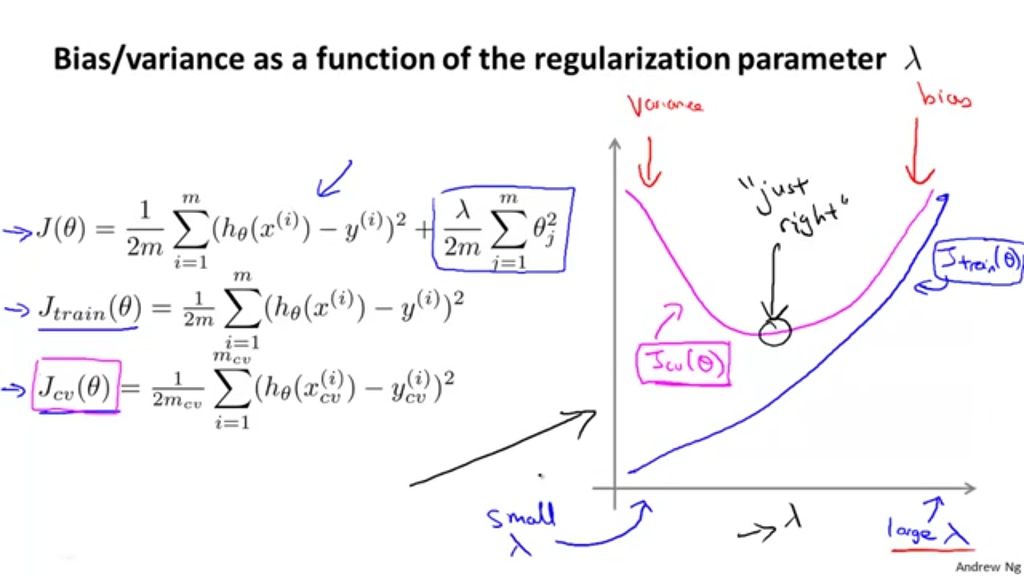

The general form of a regularization problem is the original cost function plus a penalty term scaled by λ. λ is the regularization rate, and it controls the amount of regularization applied to the model. L2 regularization adds a squared penalty term, while L1 regularization adds a penalty term based on the absolute values of the model parameters.
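To make the two penalty terms concrete, here is a minimal sketch in plain Python. The function names `l1_penalty` and `l2_penalty` and the example parameter vector are illustrative assumptions, not taken from any particular library.

```python
def l1_penalty(theta, lam):
    """L1 penalty: lam times the sum of absolute parameter values."""
    return lam * sum(abs(t) for t in theta)


def l2_penalty(theta, lam):
    """L2 penalty: lam times the sum of squared parameter values."""
    return lam * sum(t * t for t in theta)


# Hypothetical parameter vector, chosen only for illustration.
theta = [1.0, -2.0, 3.0]
print(l1_penalty(theta, lam=0.5))  # 0.5 * (1 + 2 + 3) = 3.0
print(l2_penalty(theta, lam=0.5))  # 0.5 * (1 + 4 + 9) = 7.0
```

Because the L2 term squares each parameter, it punishes large weights much more heavily than small ones, whereas the L1 term grows linearly with the size of each weight.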

The value of λ is selected using cross-validation. This keeps the model from overfitting the data and follows Occam's razor. This article focuses on L1 and L2 regularization.

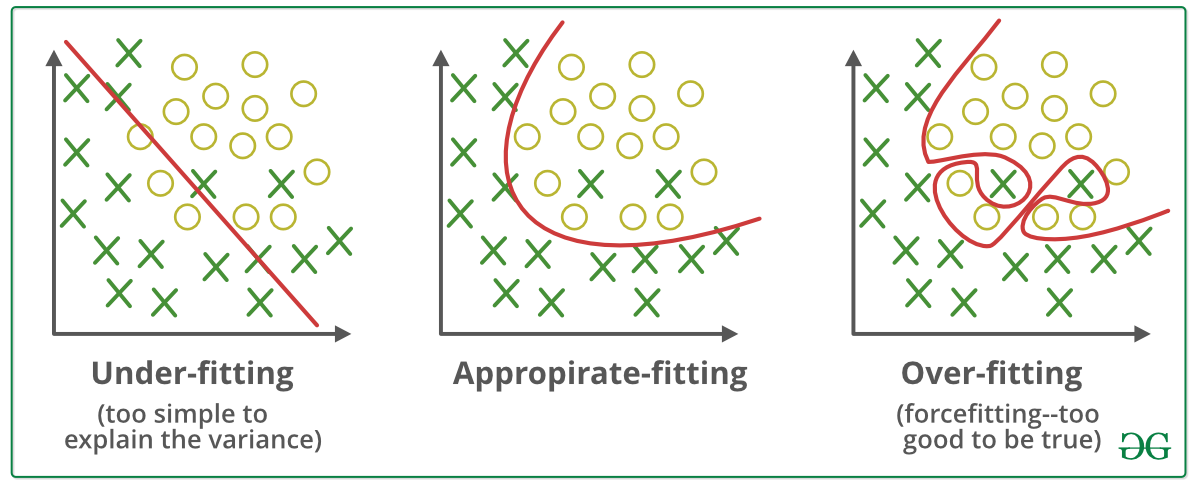

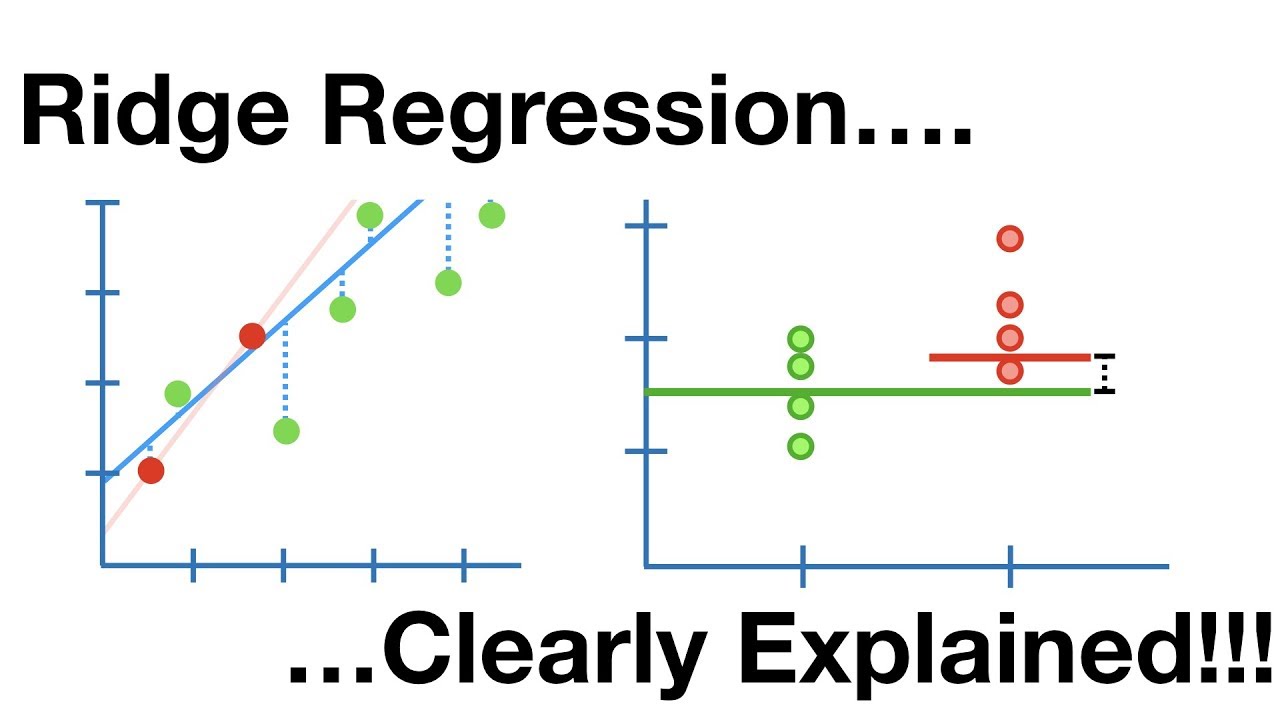

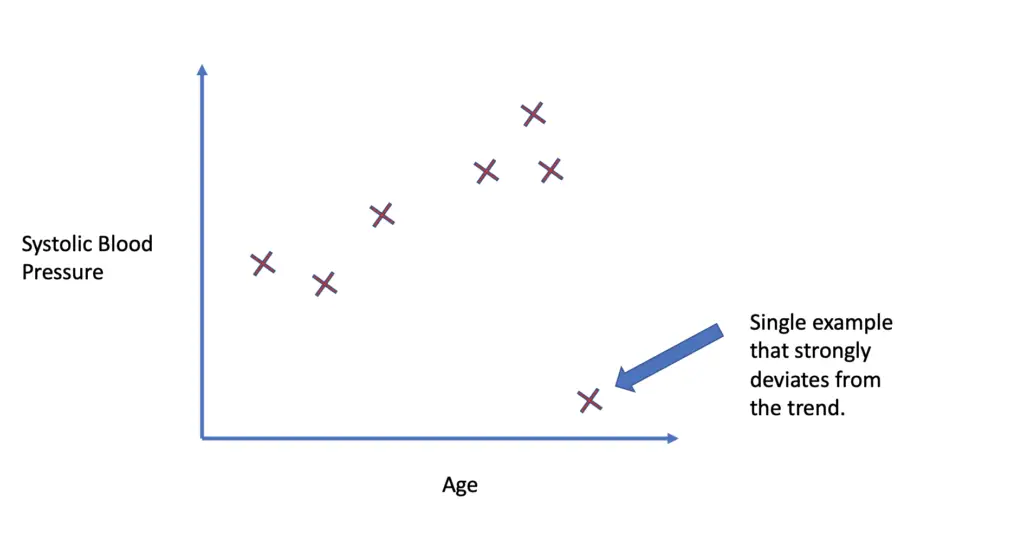

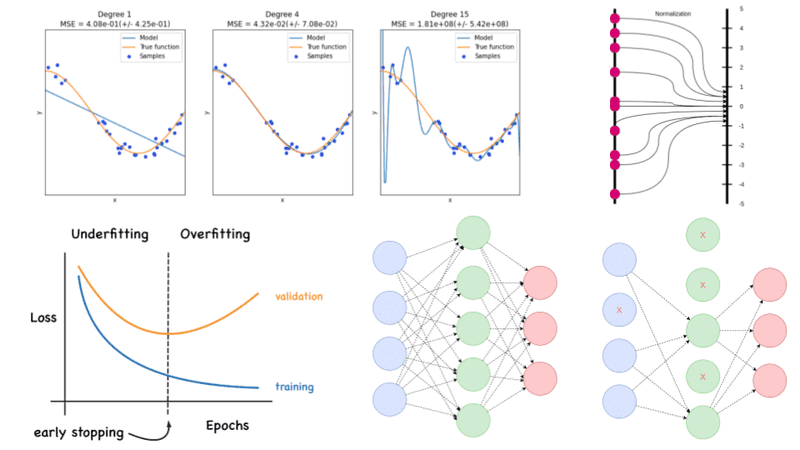

While training a machine learning model, the model can easily be overfitted or underfitted. Regularization is a technique used to reduce errors by fitting the function appropriately on the given training set and avoiding overfitting. The penalty controls the model complexity: larger penalties yield simpler models.

Regularization techniques help reduce the chance of overfitting and help us get an optimal model. Both overfitting and underfitting are problems that ultimately cause poor predictions on new data. One of the major aspects of training your machine learning model is avoiding overfitting.

$\text{Modified } J(\theta; X, y) = J(\theta; X, y) + \lambda \cdot \text{ParameterNorm}$. Regularization is a technique to prevent the model from overfitting by adding extra information to it.
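The modified objective can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the unregularized cost J is taken to be the mean squared error of a linear model without an intercept, and the names `mse`, `modified_cost`, and `parameter_norm` are hypothetical.

```python
def mse(theta, X, y):
    """J(theta; X, y): mean squared error of a linear model (no intercept)."""
    total = 0.0
    for row, target in zip(X, y):
        prediction = sum(t * v for t, v in zip(theta, row))
        total += (prediction - target) ** 2
    return total / len(y)


def modified_cost(theta, X, y, lam, parameter_norm):
    """Modified J = J(theta; X, y) + lam * ParameterNorm(theta)."""
    return mse(theta, X, y) + lam * parameter_norm(theta)


def squared_l2_norm(theta):
    """One possible parameter norm: the sum of squared parameters."""
    return sum(t * t for t in theta)


# Toy data, invented for this example.
X = [[1.0, 0.0], [0.0, 1.0]]
y = [1.0, 2.0]
theta = [1.0, 1.0]

print(mse(theta, X, y))  # unregularized cost: 0.5
print(modified_cost(theta, X, y, lam=0.1, parameter_norm=squared_l2_norm))
```

Passing the norm in as a function keeps the sketch agnostic about whether an L1 or L2 penalty is used; with λ = 0 the modified cost reduces to the original cost.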

In machine learning, regularization problems impose an additional penalty on the cost function. Regularization reduces the excess weight placed on specific features and distributes weight more evenly across features. By Suf, Dec 12, 2021. Experience, Machine Learning, Tips.

This article is titled "The Best Guide to Regularization in Machine Learning". By noise, we mean the data points that don't really represent the underlying patterns or trends in the data.

Regularization is one of the important concepts in machine learning. We can regularize machine learning methods through the cost function using L1 regularization or L2 regularization. The red curve is before regularization and the blue curve is after regularization.

Sometimes the machine learning model performs well with the training data but does not perform well with the test data. Poor performance can occur due to either overfitting or underfitting the data. Types of Regularization.

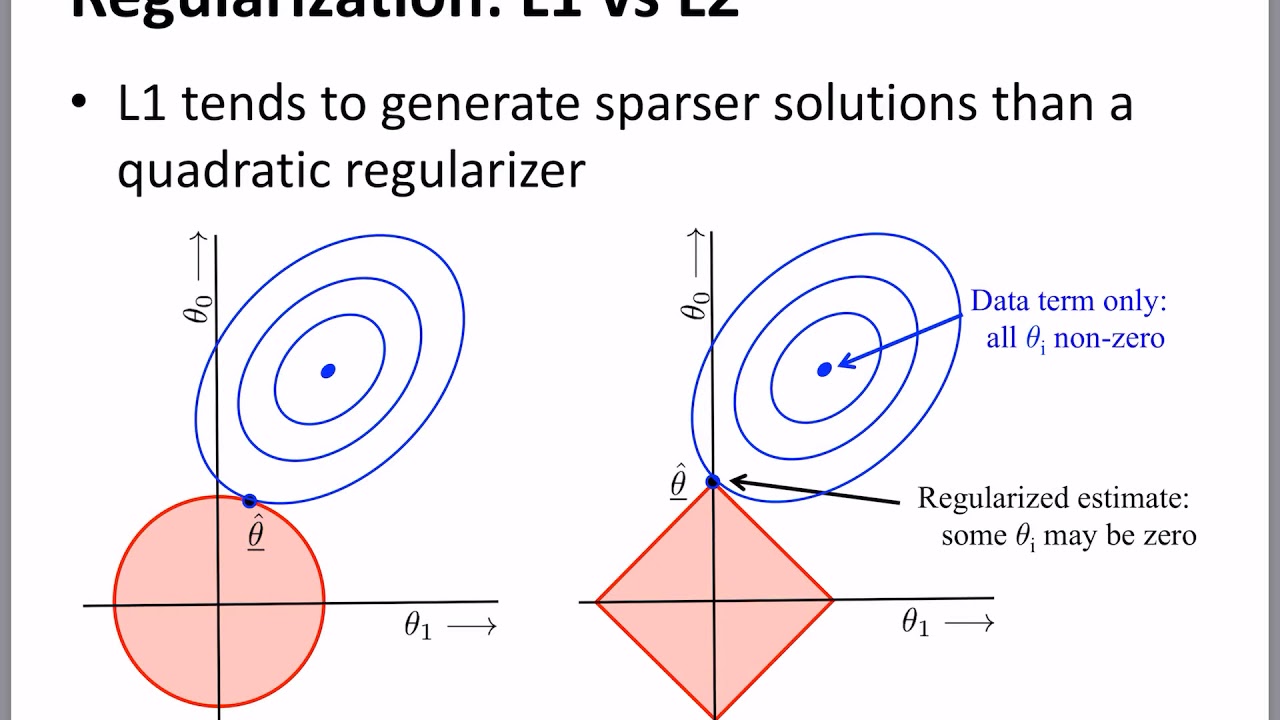

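With the L1 norm as the parameter norm, the following represents the modified objective function: $\text{Modified } J(\theta; X, y) = J(\theta; X, y) + \lambda \lVert \theta \rVert_1$. A regression model that uses the L1 regularization technique is called LASSO (Least Absolute Shrinkage and Selection Operator) regression.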

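Overfitting is a phenomenon where the model performs well on the training data but poorly on the test data. To avoid this, we use regularization in machine learning so that a model fitted on the training set generalizes to our test set. The regularized objective is written $\text{Modified } J(\theta)$.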

Trying out regularization values one by one is a cumbersome approach. Regularization is a method to balance overfitting and underfitting a model during training. How well a model generalizes from its training data determines how well it performs on unseen data.

A regression model that uses the L2 regularization technique is called Ridge regression. Regularization helps to solve the problem of overfitting in machine learning.

Regularization is a method of rescuing a regression model from overfitting by shrinking the coefficients of the features towards zero. The model will have low accuracy on unseen data (test data) if it is overfitting.

L1 regularization adds an absolute penalty term to the cost function while L2 regularization adds a squared penalty term to the cost function. Regularization is one of the most important concepts of machine learning. A brute force way to select a good value of the regularization parameter is to try different values to train a model and check predicted results on the test set.
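The brute-force search described above can be sketched as a loop over candidate values. This is a minimal illustration under several assumptions: a one-feature ridge model without an intercept (which has a simple closed-form solution), a single held-out set standing in for the test set, and invented data. The names `fit_ridge_1d` and `select_lambda` are hypothetical.

```python
def fit_ridge_1d(xs, ys, lam):
    """Closed-form minimizer of sum((w*x - y)**2) + lam * w**2 over w."""
    numerator = sum(x * y for x, y in zip(xs, ys))
    denominator = sum(x * x for x in xs) + lam
    return numerator / denominator


def mean_squared_error(w, xs, ys):
    """Average squared prediction error of the model y = w * x."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def select_lambda(candidates, train_x, train_y, held_x, held_y):
    """Train once per candidate and keep the one with the lowest held-out error."""
    best_lam, best_err = None, float("inf")
    for lam in candidates:
        w = fit_ridge_1d(train_x, train_y, lam)
        err = mean_squared_error(w, held_x, held_y)
        if err < best_err:
            best_lam, best_err = lam, err
    return best_lam


# Invented data: the training targets are noisy, the held-out targets follow y = 2x.
train_x, train_y = [1.0, 2.0, 3.0, 4.0], [2.5, 4.1, 6.6, 8.4]
held_x, held_y = [5.0, 6.0], [10.0, 12.0]

best = select_lambda([0.0, 0.1, 1.0, 10.0], train_x, train_y, held_x, held_y)
print(best)  # the candidate with the lowest held-out error
```

In practice the same loop is usually run with k-fold cross-validation rather than a single split, so that the chosen value does not depend on one particular partition of the data.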

This happens because your model is trying too hard to capture the noise in your training dataset. Let us understand how it works. We do this in the context of a simple 1-dim logistic regression model $P(y = 1 \mid x, \mathbf{w}) = g(w_0 + w_1 x)$, where $g(z) = (1 + \exp(-z))^{-1}$.
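The one-dimensional logistic model can be written directly in Python. This is a small sketch; the function names `g` and `prob_y1` are chosen here for illustration, and the two-branch form of `g` is a numerical-stability choice that is not part of the formula itself.

```python
import math


def g(z):
    """Logistic function g(z) = 1 / (1 + exp(-z)), computed without overflow."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    # For negative z, use the algebraically equivalent form exp(z) / (1 + exp(z)).
    e = math.exp(z)
    return e / (1.0 + e)


def prob_y1(x, w0, w1):
    """P(y = 1 | x, w) = g(w0 + w1 * x) for a single input feature x."""
    return g(w0 + w1 * x)


print(g(0.0))                    # 0.5: the model is undecided when w0 + w1*x = 0
print(prob_y1(2.0, -2.0, 1.0))   # 0.5, since -2 + 1*2 = 0
```

Larger values of `w1` make the transition between the two classes sharper, which is why penalizing the size of the weights leads to smoother, less confident predictions.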

It is a type of regression. We will see in depth below how regularization works and how each of these regularization techniques in machine learning behaves. You can also reduce the model capacity by driving various parameters to zero.

In the next section, we look at how both methods work using linear regression as an example. Based on the approach used to overcome overfitting, we can classify the regularization techniques into three categories. 6.867 Machine learning, Regularization example: We'll commence here by expanding a bit on the relation between the effective number of parameter choices and regularization discussed in the lectures.

It deals with overfitting of the data, which can lead to decreased model performance. Regularization in Linear Regression. Regularization is a strategy that prevents overfitting by providing new knowledge to the machine learning algorithm.

In machine learning, two types of regularization are commonly used. The machine learning model learns from the available training data and fits itself to the patterns in that data. With the L2 norm, the penalty is the sum of the squared parameter values.

Regularization is a form of regression that constrains or shrinks the coefficient estimates towards zero. Linear models such as linear regression and logistic regression allow for regularization strategies such as adding parameter norm penalties to the objective function. Regularization helps the model generalize what it learned from the training examples to new, unseen data.
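To see how a parameter norm penalty shrinks a coefficient in practice, here is a minimal gradient-descent sketch. It assumes a one-weight linear model y = w·x with a mean-squared-error loss and an L2 penalty λw²; the function name `fit_with_l2`, the learning rate, the step count, and the data are all illustrative choices.

```python
def fit_with_l2(xs, ys, lam, learning_rate=0.01, steps=2000):
    """Minimize mean((w*x - y)**2) + lam * w**2 by plain gradient descent."""
    w = 0.0
    n = len(xs)
    for _ in range(steps):
        # Gradient of the data-fit term with respect to w.
        grad_loss = 2.0 * sum(x * (w * x - y) for x, y in zip(xs, ys)) / n
        # Gradient of the L2 penalty term lam * w**2.
        grad_penalty = 2.0 * lam * w
        w -= learning_rate * (grad_loss + grad_penalty)
    return w


# Invented data that follows y = 2x exactly.
xs, ys = [1.0, 2.0, 3.0, 4.0], [2.0, 4.0, 6.0, 8.0]

print(fit_with_l2(xs, ys, lam=0.0))  # close to 2.0, the unpenalized fit
print(fit_with_l2(xs, ys, lam=1.0))  # smaller than 2.0: the penalty pulls w towards zero
```

The only change from ordinary gradient descent is the extra `grad_penalty` term, which pulls the weight towards zero on every step in proportion to its current size.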

Each regularization method is marked as strong, medium, or weak based on how effective the approach is in addressing the issue of overfitting. Overfitting occurs when a machine learning model is tuned to learn the noise in the data rather than the patterns or trends in the data. The simplest model is usually the most correct.

The θs are the factors/weights being tuned. Overfitting means the model is not able to predict the output when dealing with unseen data. 50 A Simple Regularization Example.

How does regularization work? With Lasso, however, coefficient values can be reduced all the way to zero. In layman's terms, the regularization approach reduces the magnitude of the independent factors' coefficients while maintaining the same number of variables.
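The difference between shrinking a coefficient and setting it exactly to zero can be illustrated with a one-parameter sketch. It assumes the simplified objectives stated in the docstrings, where `z` is the unregularized estimate of a single coefficient; the function names are hypothetical.

```python
def ridge_shrink(z, lam):
    """Minimizer of 0.5*(w - z)**2 + 0.5*lam*w**2: scales z towards zero."""
    return z / (1.0 + lam)


def lasso_shrink(z, lam):
    """Minimizer of 0.5*(w - z)**2 + lam*abs(w): soft-thresholding of z."""
    if z > lam:
        return z - lam
    if z < -lam:
        return z + lam
    return 0.0  # coefficients with |z| <= lam are set exactly to zero


for z in [2.0, 0.3, -2.0]:
    print(z, ridge_shrink(z, lam=0.5), lasso_shrink(z, lam=0.5))
```

Under these assumptions the L2 rule rescales every coefficient but never makes a non-zero one vanish, while the L1 rule zeroes out any coefficient whose unregularized value is no larger than λ in magnitude.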
